Have you ever thought about what happens when you press play on an Android device? There is a lot of hidden complexity in playing video on your mobile phone. In this post I’ll give you a quick tour through the architecture of version 2.0 of our JW Player SDK for Android. I’ll start with our topmost layer, the HTML5 player (jwplayer.js), and then move down the stack all the way to Android’s media APIs.

HTML5 Player

The default JW Player 7 Skin

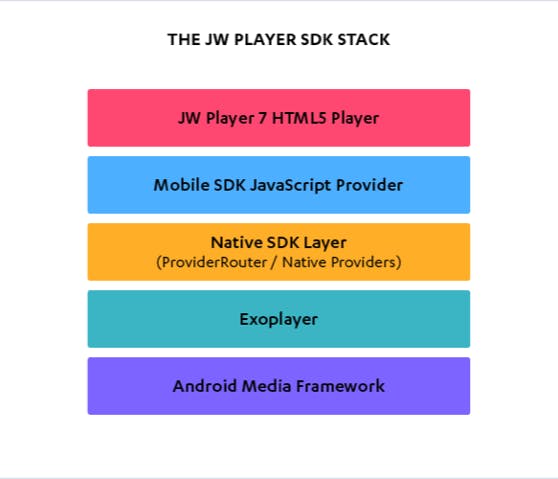

As we explained in our previous blog post, version 2.0 of our SDK is based on a hybrid software design. Our SDK consists of several layers, the top one being a WebView that contains our flagship JW Player 7 HTML5 player (jwplayer.js). In our SDK’s technology stack, the HTML5 player is responsible for rendering the user interface, scheduling VAST advertisements, and rendering external text captions. Our hybrid approach lets us reuse these features without having to reimplement them in Java. A major benefit for customers is that they can use their existing JW Player 7 skins in their mobile apps.

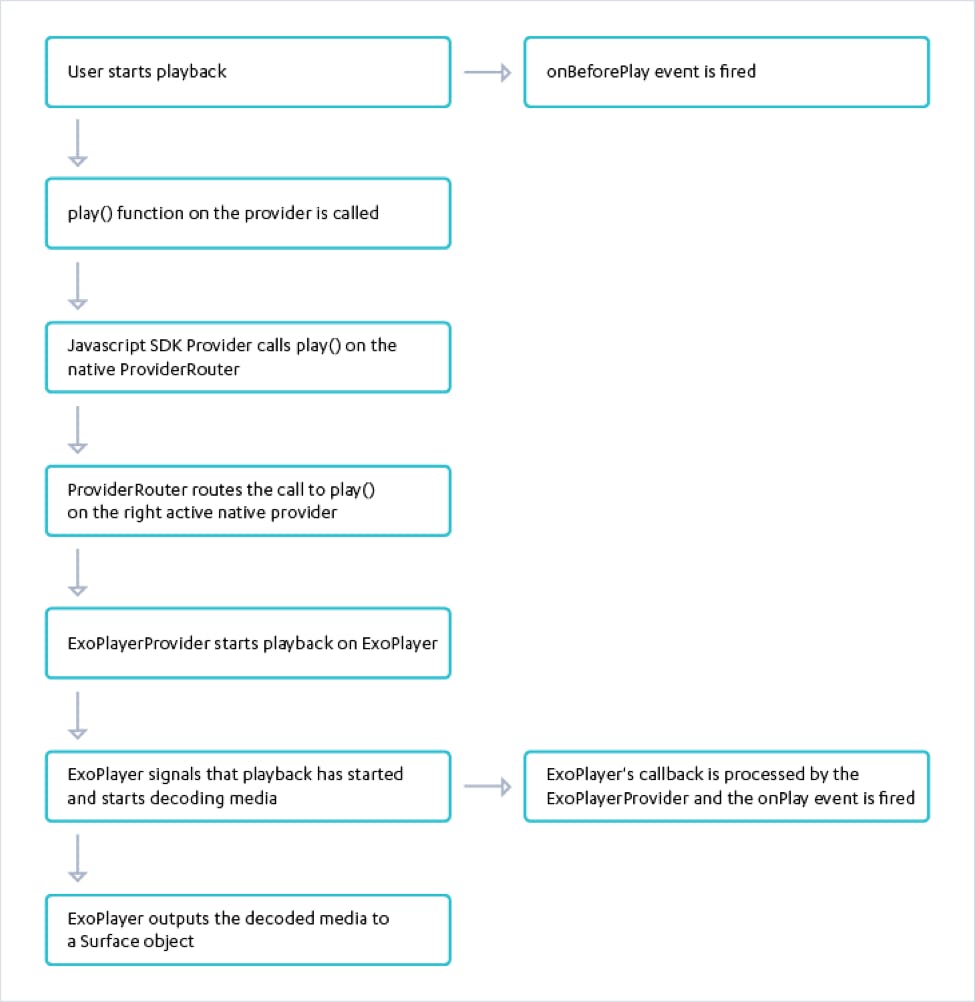

Now, let’s get back to the subject – when you hit play, you are actually clicking on a button which will tell the HTML5 player to call the play() method on the active provider in the HTML5 player. A provider within our architecture is a strategy for implementing media playback: it abstracts away the implementation details for a given type of media which allows the HTML5 player to treat each one the same.

JW Player Android SDK for Android 2.0 Technology stack.

In our Android SDK, we use a custom JavaScript provider that acts as glue to our native ExoPlayerProvider (more on that later). Our custom provider has access to a native Java object, our so-called ProviderRouter. The ProviderRouter is a Java class that is injected into our SDK’s WebView using Android’s addJavascriptInterface() method. This method injects a Java object into the WebView’s JavaScript context and gives JavaScript code access to that object’s public methods (for apps targeting API level 17 or higher, only public methods annotated with @JavascriptInterface are exposed).
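On a device, registering such a bridge is a single call, `webView.addJavascriptInterface(router, "ProviderRouter")`, where the second argument (the JavaScript-side name, an assumption here) is the name under which page scripts can reach the object. Because that requires an Android runtime, the sketch below models the idea on a plain JVM: a hypothetical `@ExposedToJs` annotation stands in for `@JavascriptInterface`, a hypothetical `RouterFacade` stands in for the ProviderRouter, and a hypothetical `JsBridge` dispatches calls by method name while refusing any method that is not annotated.

```java
import java.lang.annotation.ElementType;
import java.lang.annotation.Retention;
import java.lang.annotation.RetentionPolicy;
import java.lang.annotation.Target;
import java.lang.reflect.Method;

// Hypothetical stand-in for android.webkit.JavascriptInterface, so the sketch runs on a plain JVM.
@Retention(RetentionPolicy.RUNTIME)
@Target(ElementType.METHOD)
@interface ExposedToJs {}

// Hypothetical facade standing in for the ProviderRouter.
class RouterFacade {
    private String lastCall = "none";

    @ExposedToJs
    public void play() { lastCall = "play"; }

    @ExposedToJs
    public void pause() { lastCall = "pause"; }

    // Public but not annotated, so the bridge refuses to call it.
    public void releaseNativeResources() { lastCall = "release"; }

    public String lastCall() { return lastCall; }
}

// Models how a WebView dispatches a JavaScript call such as router.play()
// to the Java object registered with addJavascriptInterface().
class JsBridge {
    private final Object target;

    JsBridge(Object target) { this.target = target; }

    /** Returns true if the call was dispatched, false if the method is missing or not exposed. */
    boolean call(String methodName) {
        try {
            Method method = target.getClass().getMethod(methodName);
            if (!method.isAnnotationPresent(ExposedToJs.class)) {
                return false;
            }
            method.invoke(target);
            return true;
        } catch (ReflectiveOperationException e) {
            return false;
        }
    }
}
```

The annotation check is the important design point: without it, every public method, including `getClass()` inherited from `Object`, would be reachable from page scripts.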

JW Player SDK for Android Native Layer

The ProviderRouter routes each provider call to one of our native providers. Similar to jwplayer.js, our native SDK supports multiple providers: we currently have one for playback on the device (ExoPlayerProvider) and one for playback on external devices such as Chromecast (CastProvider).
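The routing idea is essentially the strategy pattern applied a second time, on the native side. The following is a minimal sketch, assuming a hypothetical `Provider` interface (the SDK’s actual interface is not shown in this post); `RecordingProvider` is a stand-in for ExoPlayerProvider and CastProvider that only records what it was asked to do.

```java
import java.util.HashMap;
import java.util.Map;

// Hypothetical provider contract; the SDK's real interface is not shown in the post.
interface Provider {
    void load(String url);
    void play();
    void pause();
    String state();
}

// Stand-in for ExoPlayerProvider or CastProvider that only records what it was asked to do.
class RecordingProvider implements Provider {
    private final String name;
    private String state = "idle";

    RecordingProvider(String name) { this.name = name; }

    public void load(String url) { state = name + ":loaded"; }
    public void play() { state = name + ":playing"; }
    public void pause() { state = name + ":paused"; }
    public String state() { return state; }
}

// Forwards every provider call to whichever provider is currently active.
class ProviderRouter {
    private final Map<String, Provider> providers = new HashMap<>();
    private Provider active;

    void register(String id, Provider provider) {
        providers.put(id, provider);
        if (active == null) {
            active = provider; // the first registered provider (e.g. local playback) is the default
        }
    }

    void switchTo(String id) {
        Provider next = providers.get(id);
        if (next == null) {
            throw new IllegalArgumentException("Unknown provider: " + id);
        }
        if (active != next) {
            active.pause(); // stop the outgoing provider before handing off
        }
        active = next;
    }

    void load(String url) { active.load(url); }
    void play() { active.play(); }
    void pause() { active.pause(); }
    Provider active() { return active; }
}
```

Pausing the outgoing provider in `switchTo()` is a choice made for this sketch, so that local playback does not keep running after a handoff to an external device.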

When playback starts, the ProviderRouter routes the call to play() to our ExoPlayerProvider, which is our abstraction layer on top of ExoPlayer. Within our SDK, ExoPlayer performs the actual playback of media files. ExoPlayer offers several advantages over Android’s built-in MediaPlayer:

- ExoPlayer supports Dynamic Adaptive Streaming over HTTP (DASH), which Android’s built-in MediaPlayer does not.
- ExoPlayer supports advanced HLS features, such as correct handling of stream discontinuities and CEA-608 captions.
- Because ExoPlayer is a library bundled with our SDK, we can update the player along with each SDK release, whereas Android’s built-in MediaPlayer is only updated with new Android releases.
- ExoPlayer has fewer device-specific issues than Android’s MediaPlayer.

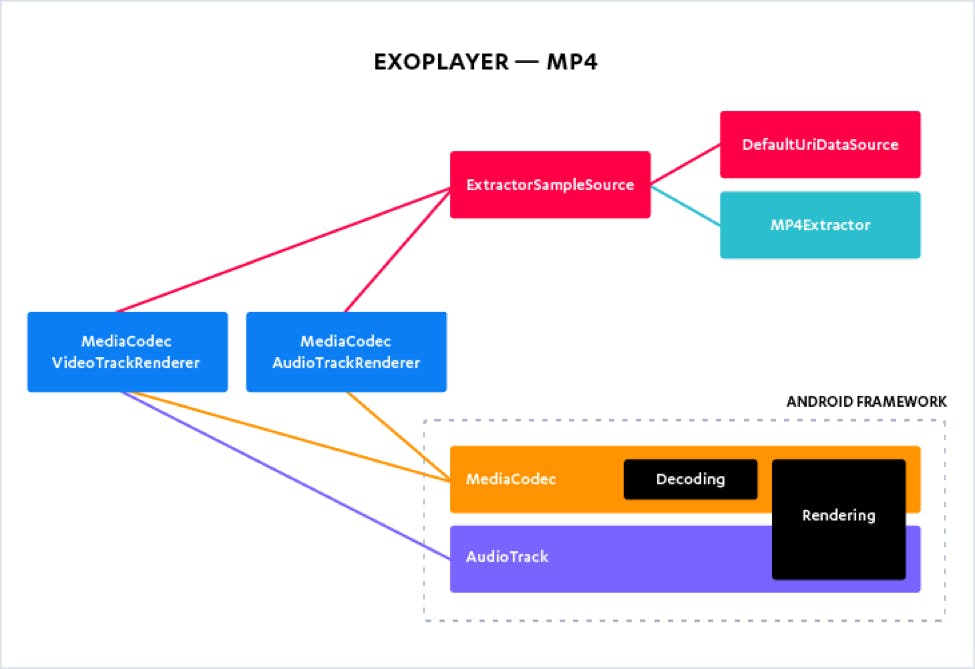

Object model for MP4 playback using ExoPlayer

ExoPlayer – TrackRenderers

To play a specific type of media, such as video, audio, or text, ExoPlayer uses TrackRenderers. ExoPlayer provides default TrackRenderers for video, audio, and text (e.g., closed captions). The default video and audio TrackRenderers use Android’s MediaCodec class to decode individual media samples, so they can handle any audio or video format that a given Android device supports.
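To illustrate this division of labour, here is a toy model in which each track is handed to the first renderer that supports its MIME type, and every tick of the playback loop asks each assigned renderer to work at the same playback position. `Renderer`, `PrefixRenderer`, and `MiniPlayer` are hypothetical names, and ExoPlayer’s real TrackRenderer API differs.

```java
import java.util.ArrayList;
import java.util.Arrays;
import java.util.LinkedHashMap;
import java.util.List;
import java.util.Map;

// Hypothetical, simplified renderer contract; ExoPlayer's real TrackRenderer API differs.
interface Renderer {
    boolean supports(String mimeType);
    void render(String mimeType, long positionUs);
}

// Claims every track whose MIME type starts with a given prefix, e.g. "video/".
class PrefixRenderer implements Renderer {
    private final String mimePrefix;
    final List<String> rendered = new ArrayList<>();

    PrefixRenderer(String mimePrefix) { this.mimePrefix = mimePrefix; }

    public boolean supports(String mimeType) { return mimeType.startsWith(mimePrefix); }

    public void render(String mimeType, long positionUs) {
        rendered.add(mimeType + "@" + positionUs); // a real renderer would decode and output samples here
    }
}

// Toy player: assigns tracks to renderers, then drives all of them from one clock.
class MiniPlayer {
    private final List<Renderer> renderers;
    private final Map<String, Renderer> assignments = new LinkedHashMap<>();

    MiniPlayer(Renderer... renderers) { this.renderers = Arrays.asList(renderers); }

    // Give each track to the first renderer that supports its MIME type; unsupported tracks are skipped.
    void prepare(String... trackMimeTypes) {
        for (String mimeType : trackMimeTypes) {
            for (Renderer renderer : renderers) {
                if (renderer.supports(mimeType)) {
                    assignments.put(mimeType, renderer);
                    break;
                }
            }
        }
    }

    // One iteration of the playback loop: every renderer works at the same playback position.
    void tick(long positionUs) {
        for (Map.Entry<String, Renderer> entry : assignments.entrySet()) {
            entry.getValue().render(entry.getKey(), positionUs);
        }
    }

    int assignedTrackCount() { return assignments.size(); }
}
```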

ExoPlayer – SampleSources

To instantiate a TrackRenderer, you pass a SampleSource to its constructor.

A SampleSource produces media samples from a given data source using Extractors, which extract data from a specific container format. ExoPlayer ships with Extractor implementations for a wide range of media container formats, such as MP3, MP4, and WebM.
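One way to decide which Extractor should read a stream is to sniff its first few bytes for a known format signature. The sketch below shows that idea with a hypothetical `ContainerSniffer`, using well-known signatures: an MP4 `ftyp` box, the EBML magic number at the start of WebM files, and an ID3 tag or MPEG frame sync for MP3. It is a simplification; real extractors validate much more of the header before committing to a format.

```java
import java.nio.charset.StandardCharsets;

// Hypothetical sniffer: inspects the first bytes of a stream to guess its container format.
class ContainerSniffer {
    enum Container { MP4, WEBM, MP3, UNKNOWN }

    static Container sniff(byte[] head) {
        // MP4 (ISO base media file format): a 4-byte box size, then the box type "ftyp".
        if (head.length >= 8 && new String(head, 4, 4, StandardCharsets.US_ASCII).equals("ftyp")) {
            return Container.MP4;
        }
        // WebM/Matroska: the EBML magic number 0x1A45DFA3.
        if (head.length >= 4 && (head[0] & 0xFF) == 0x1A && (head[1] & 0xFF) == 0x45
                && (head[2] & 0xFF) == 0xDF && (head[3] & 0xFF) == 0xA3) {
            return Container.WEBM;
        }
        // MP3: an ID3v2 tag...
        if (head.length >= 3 && head[0] == 'I' && head[1] == 'D' && head[2] == '3') {
            return Container.MP3;
        }
        // ...or an MPEG audio frame sync (11 set bits). Simplified: AAC ADTS headers also pass this check.
        if (head.length >= 2 && (head[0] & 0xFF) == 0xFF && (head[1] & 0xE0) == 0xE0) {
            return Container.MP3;
        }
        return Container.UNKNOWN;
    }
}
```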

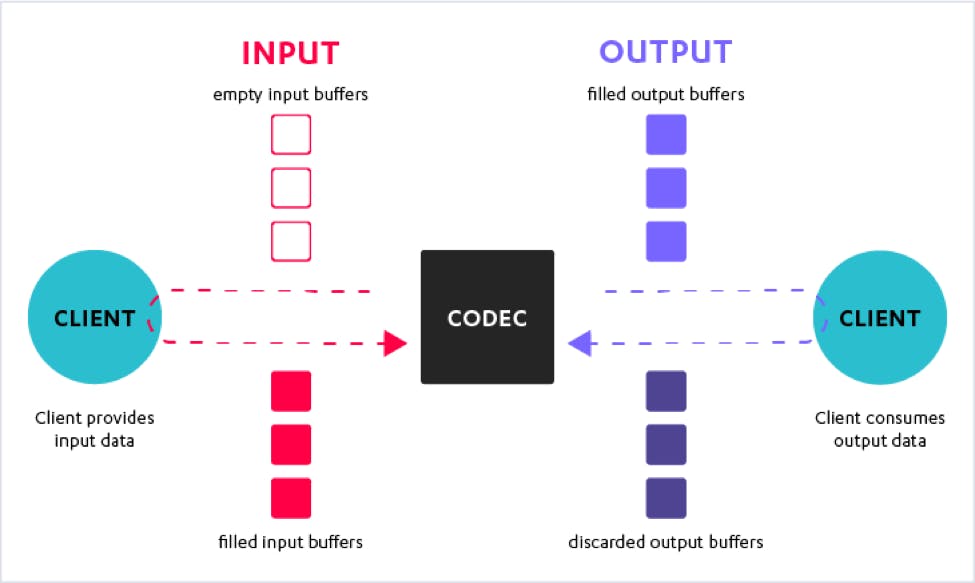

Android Media Framework – MediaCodec

To decode the media samples provided by the SampleSources, ExoPlayer’s default TrackRenderers use Android’s MediaCodec class. MediaCodec is part of Android’s low-level multimedia infrastructure and was added in Android 4.1 (API level 16), which is why version 2.0 of our SDK requires Android 4.1 or higher.

A codec processes encoded media input data to generate output data that can be rendered by your device’s audio and video components.

The basic usage flow of a codec in synchronous mode is as follows:

1. Create, configure, and start a codec.
2. While there is input:
   1. Request an input buffer.
   2. When the input buffer is available, fill it with data.
   3. Queue the input buffer on the codec.
   4. Wait until the codec has processed the input and produced an output buffer, then release that output buffer back to the codec, rendering its contents to the Surface.
3. When there is no more input, stop the codec and release it.
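These steps map onto MediaCodec’s `dequeueInputBuffer()`, `queueInputBuffer()`, `dequeueOutputBuffer()`, and `releaseOutputBuffer()` methods. Because MediaCodec only exists on a device, the following sketch runs the same loop against a hypothetical in-memory `FakeCodec` whose method names mirror MediaCodec but whose signatures are simplified; its “decoding” just upper-cases text samples, and a list stands in for the Surface.

```java
import java.util.ArrayDeque;
import java.util.ArrayList;
import java.util.HashMap;
import java.util.List;
import java.util.Locale;
import java.util.Map;

// Hypothetical in-memory codec. Method names mirror android.media.MediaCodec,
// but signatures are simplified and "decoding" just upper-cases each text sample.
class FakeCodec {
    static final int TRY_AGAIN_LATER = -1;

    private final StringBuilder[] inputBuffers;
    private final ArrayDeque<Integer> freeInputs = new ArrayDeque<>();
    private final ArrayDeque<Integer> readyOutputs = new ArrayDeque<>();
    private final Map<Integer, String> outputBuffers = new HashMap<>();
    private int nextOutputIndex = 0;
    final List<String> surface = new ArrayList<>(); // stands in for the output Surface
    String state = "configured";

    FakeCodec(int inputBufferCount) {
        inputBuffers = new StringBuilder[inputBufferCount];
        for (int i = 0; i < inputBufferCount; i++) {
            inputBuffers[i] = new StringBuilder();
            freeInputs.add(i);
        }
    }

    void start() { state = "started"; }

    int dequeueInputBuffer() {
        Integer index = freeInputs.poll();
        return index == null ? TRY_AGAIN_LATER : index;
    }

    StringBuilder getInputBuffer(int index) { return inputBuffers[index]; }

    void queueInputBuffer(int index) {
        // "Decode" right away and hand the input buffer back to the free pool.
        int out = nextOutputIndex++;
        outputBuffers.put(out, inputBuffers[index].toString().toUpperCase(Locale.ROOT));
        readyOutputs.add(out);
        freeInputs.add(index);
    }

    int dequeueOutputBuffer() {
        Integer index = readyOutputs.poll();
        return index == null ? TRY_AGAIN_LATER : index;
    }

    void releaseOutputBuffer(int index, boolean render) {
        String frame = outputBuffers.remove(index);
        if (render) {
            surface.add(frame); // rendering "draws" the frame on the Surface
        }
    }

    void stop() { state = "stopped"; }
    void release() { state = "released"; }
}

class SyncDecodeLoop {
    static FakeCodec decodeAll(List<String> samples) {
        FakeCodec codec = new FakeCodec(2);          // 1. create and configure...
        codec.start();                               //    ...and start the codec
        int next = 0;
        while (next < samples.size()) {              // 2. while there is input
            int in = codec.dequeueInputBuffer();     //    request an input buffer
            if (in != FakeCodec.TRY_AGAIN_LATER) {
                StringBuilder buffer = codec.getInputBuffer(in);
                buffer.setLength(0);
                buffer.append(samples.get(next++));  //    fill it with data
                codec.queueInputBuffer(in);          //    queue it on the codec
            }
            int out;
            while ((out = codec.dequeueOutputBuffer()) != FakeCodec.TRY_AGAIN_LATER) {
                codec.releaseOutputBuffer(out, true); //   render and return the output buffer
            }
        }
        codec.stop();                                // 3. no more input: stop...
        codec.release();                             //    ...and release the codec
        return codec;
    }
}
```

Passing `true` to `releaseOutputBuffer()` corresponds to the real method’s `render` flag, which sends the buffer to the Surface before returning it to the codec.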

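Starting with Android 5.0 Lollipop (API level 21), MediaCodec also supports asynchronous processing, in which the codec notifies an app-supplied callback whenever an input or output buffer becomes available.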
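In asynchronous mode, the app registers a `MediaCodec.Callback` with `setCallback()`, and the codec calls `onInputBufferAvailable()` and `onOutputBufferAvailable()` as buffers free up. The sketch below models that flow on a single thread: a hypothetical `AsyncFakeCodec` posts callbacks to an event queue that `runUntilIdle()` drains, loosely like a Looper, and `CodecCallback` is a trimmed-down, hypothetical version of the real callback, which also has `onError()` and `onOutputFormatChanged()`.

```java
import java.util.ArrayDeque;
import java.util.ArrayList;
import java.util.List;
import java.util.Locale;

// Hypothetical, trimmed-down version of android.media.MediaCodec.Callback.
interface CodecCallback {
    void onInputBufferAvailable(int index);
    void onOutputBufferAvailable(int index);
}

// Hypothetical fake codec that delivers callbacks through an event queue instead of a real thread.
class AsyncFakeCodec {
    private final StringBuilder[] inputBuffers;
    private final List<String> outputBuffers = new ArrayList<>();
    private final ArrayDeque<Runnable> events = new ArrayDeque<>();
    private CodecCallback callback;
    final List<String> surface = new ArrayList<>(); // stands in for the output Surface

    AsyncFakeCodec(int inputBufferCount) {
        inputBuffers = new StringBuilder[inputBufferCount];
        for (int i = 0; i < inputBufferCount; i++) {
            inputBuffers[i] = new StringBuilder();
        }
    }

    void setCallback(CodecCallback callback) { this.callback = callback; }

    void start() {
        // All input buffers are free at start, so each one is announced to the callback.
        for (int i = 0; i < inputBuffers.length; i++) {
            final int index = i;
            events.add(() -> callback.onInputBufferAvailable(index));
        }
    }

    StringBuilder getInputBuffer(int index) { return inputBuffers[index]; }

    void queueInputBuffer(int index) {
        outputBuffers.add(inputBuffers[index].toString().toUpperCase(Locale.ROOT)); // "decode"
        final int outputIndex = outputBuffers.size() - 1;
        events.add(() -> callback.onOutputBufferAvailable(outputIndex)); // decoded output is ready
        events.add(() -> callback.onInputBufferAvailable(index));        // input buffer is free again
    }

    void releaseOutputBuffer(int index, boolean render) {
        if (render) {
            surface.add(outputBuffers.get(index));
        }
    }

    // Deliver pending callbacks one at a time, loosely like a Looper draining its message queue.
    void runUntilIdle() {
        Runnable event;
        while ((event = events.poll()) != null) {
            event.run();
        }
    }
}

class AsyncDecodeDemo {
    static List<String> decodeAll(List<String> samples) {
        AsyncFakeCodec codec = new AsyncFakeCodec(2);
        int[] next = {0}; // mutable counter the anonymous callback can update
        codec.setCallback(new CodecCallback() {
            @Override
            public void onInputBufferAvailable(int index) {
                if (next[0] >= samples.size()) {
                    return; // no more input: leave this buffer unused
                }
                StringBuilder buffer = codec.getInputBuffer(index);
                buffer.setLength(0);
                buffer.append(samples.get(next[0]++));
                codec.queueInputBuffer(index);
            }

            @Override
            public void onOutputBufferAvailable(int index) {
                codec.releaseOutputBuffer(index, true); // render, then return the buffer
            }
        });
        codec.start();
        codec.runUntilIdle();
        return codec.surface;
    }
}
```

Unlike the synchronous loop, the app never polls for buffers here; it only reacts when the codec announces that one is ready.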

In ExoPlayer, the video codec outputs decoded frames to a Surface, a handle to a raw buffer of pixels that is composited onto the screen.

This Surface is displayed on screen using Android’s SurfaceView class, which provides a dedicated drawing surface embedded inside a view hierarchy. In JWPlayerView’s view hierarchy, the SurfaceView that displays ExoPlayer’s output is placed below the WebView that contains jwplayer.js, so the HTML5 player’s user interface is drawn on top of the video.

Flow chart of “what happens when you press play”.

Conclusion

I hope this post gave you some insight into our SDK’s architecture and what it actually does when you hit the play button. We think the architecture of our new JW Player SDK for Android 2.0 is a big leap forward: the transition to ExoPlayer gave us great flexibility in supporting a wide array of media formats while also greatly reducing device-specific issues. The hybrid software model also made it easier for us to keep feature parity with the HTML5 player. Stay tuned for more updates from the JW Mobile Team.